In this article I want to share my recent experiment on using GPT-4 and Tana to augment my learning and sensemaking.

It will take about 8 minutes to read. Hope you find it interesting!

A couple of months ago I did an issue on augmented sensemaking.

The idea was to use AI to help me transform scattered data and fragmented information into mental models and a solid understanding.

Since then, new AI models have emerged, as well as new ways of interacting with LLMs.

They have the potential to dramatically change how we learn, think, and interact with information.

At the moment I’m working on an augmented learning methodology. It’s called Intuition.

You will see the int_ prefix in the materials below.

I want to share one of my experiments here.

Experiment

GOAL: To quickly get a good grip on a new domain.

DOMAIN: AGI

DISCLAIMER: Before the experiment I already had some prior knowledge of AGI. However, I ran similar experiments on domains that were completely new to me to back up the method.

HYPOTHESIS: Using a combo of GPT-4 and Tana can speed up my learning process and give me more nuanced insight into a new domain.

Let’s go!

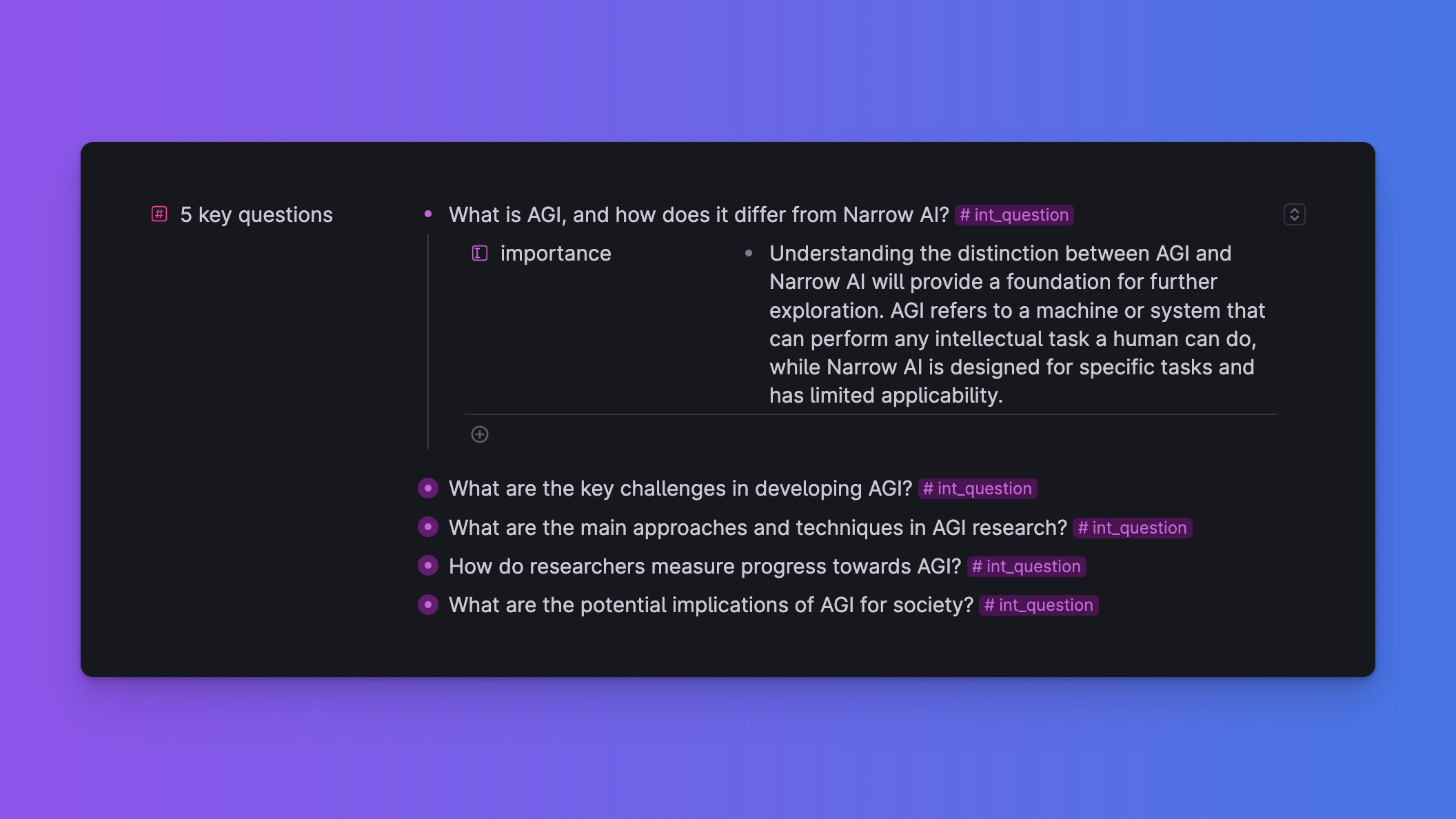

Step 1. Questions

I'd like to start with critical questions that will help me to form an initial grasp of the domain.

Prompt:

What 5 questions can help me to get a good initial understanding of AGI

Output:

That's not bad! I'm now starting to construct a scaffolding of the domain in my head.

As you can see, I put the output into Tana using Tana Paste. Here is the prompt to convert the output into the Tana Paste format:

Convert the list into the following format:

- [question] #int_question

- importance:: [importance of the question]

Example:

- What is AGI, and how does it differ from Narrow AI? #int_question

- importance:: Understanding the distinction between AGI and Narrow AI will provide a foundation for further exploration. AGI refers to a machine or system that can perform any intellectual task a human can do, while Narrow AI is designed for specific tasks and has limited

Now we need to add %%tana%% on top and you are good to go.
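If you would rather build the paste text yourself than ask GPT-4 to reformat the list, the same conversion can be sketched in a few lines of Python. This is only a minimal sketch: the function name `to_tana_paste` is hypothetical, and the two-space indentation that nests the importance field under its question is my assumption about how the nesting should look.

```python
def to_tana_paste(questions, tag="int_question"):
    """Render (question, importance) pairs as Tana Paste text.

    Hypothetical helper, not part of Tana or GPT-4.
    """
    lines = ["%%tana%%"]  # the header that goes on top of the paste
    for question, importance in questions:
        # Collapse stray line breaks so each node stays on one line.
        question = " ".join(question.split())
        importance = " ".join(importance.split())
        lines.append(f"- {question} #{tag}")
        # Assumption: a field is nested under its node by indenting two spaces.
        lines.append(f"  - importance:: {importance}")
    return "\n".join(lines)


questions = [
    (
        "What is AGI, and how does it differ from Narrow AI?",
        "Understanding the distinction between AGI and Narrow AI "
        "will provide a foundation for further exploration.",
    ),
]
paste = to_tana_paste(questions)
print(paste)
```

The printed text can then be copied and pasted into Tana in the same way as the GPT-4 output.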

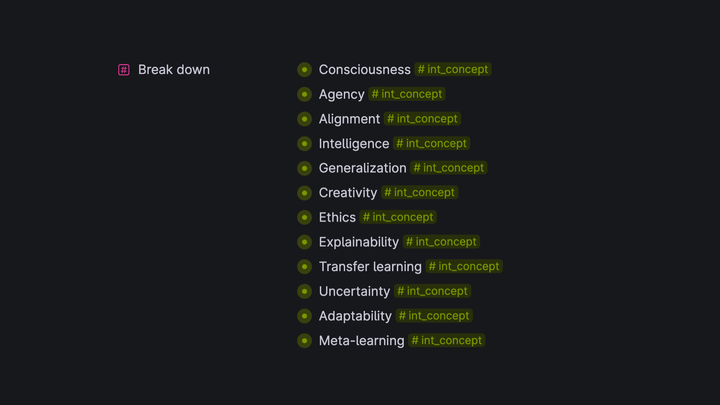

Step 2. Concepts

Now I want to break down the domain into components.

Prompt:

What are the key concepts & phenomena related to {DOMAIN}? Like {EXAMPLE1, EXAMPLE2}

Output:

Nice! So now I have a framework. These concepts help me continue building the scaffolding of domain understanding.
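The prompts so far are really templates with a {DOMAIN} slot, so they can be reused for any domain. Here is a minimal sketch of filling them in with Python. The names `PROMPTS` and `build_prompt` are hypothetical, and the two example concepts are just illustrative picks, not necessarily the ones I typed into GPT-4.

```python
# Prompt templates from Step 1 and Step 2, with named placeholders.
PROMPTS = {
    "questions": "What 5 questions can help me to get a good initial understanding of {domain}",
    "concepts": "What are the key concepts & phenomena related to {domain}? Like {examples}",
}


def build_prompt(step, domain, examples=()):
    """Fill the template for the given step (hypothetical helper)."""
    # Templates without an {examples} slot simply ignore that argument.
    return PROMPTS[step].format(domain=domain, examples=", ".join(examples))


questions_prompt = build_prompt("questions", "AGI")
concepts_prompt = build_prompt("concepts", "AGI", ["Generalization", "Creativity"])
print(questions_prompt)
print(concepts_prompt)
```

Swapping "AGI" for another domain name gives the same starting prompts for a different experiment.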

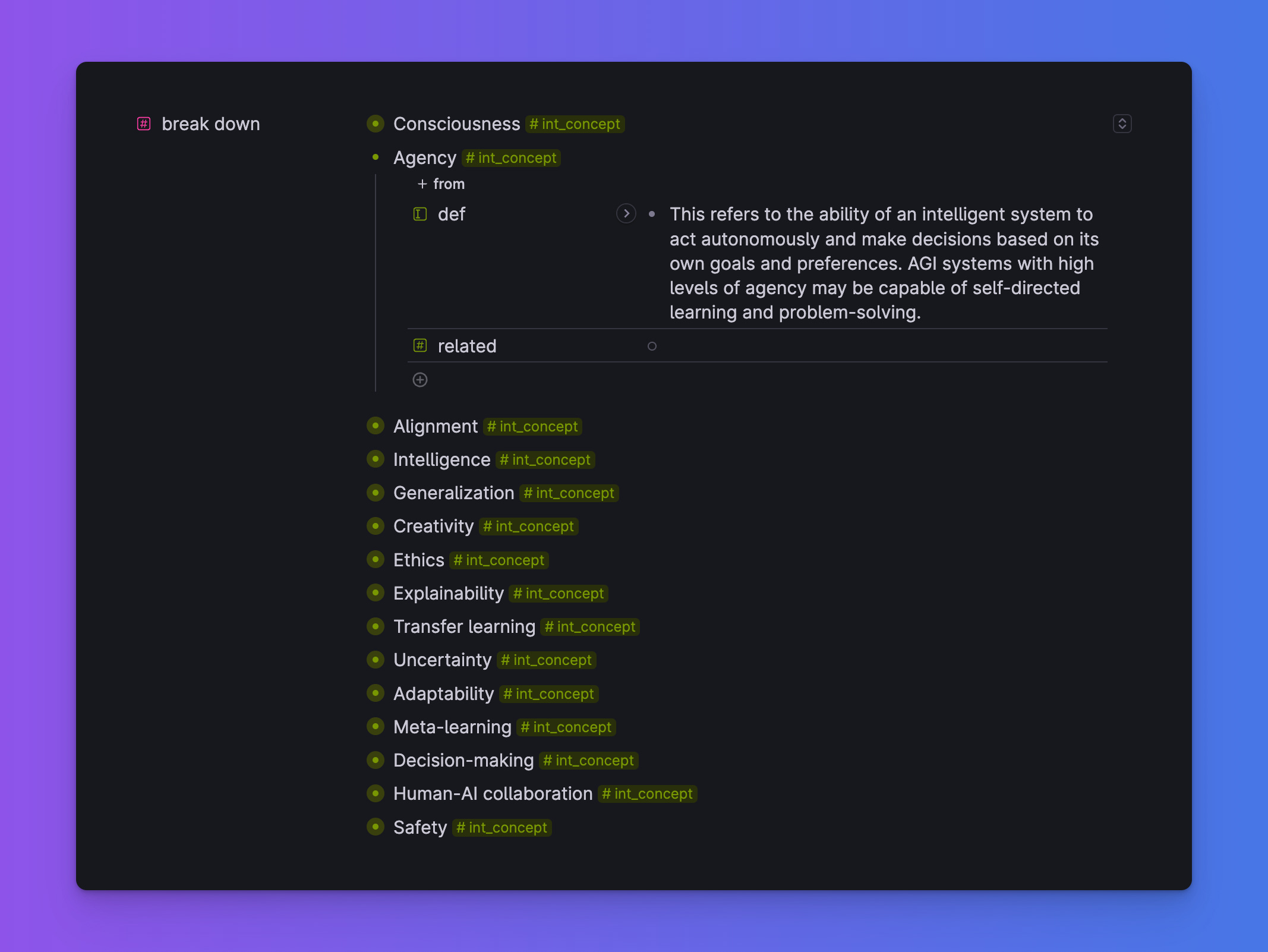

Step 3. Relationship

Now I want to put this framework to the test using real-world information.

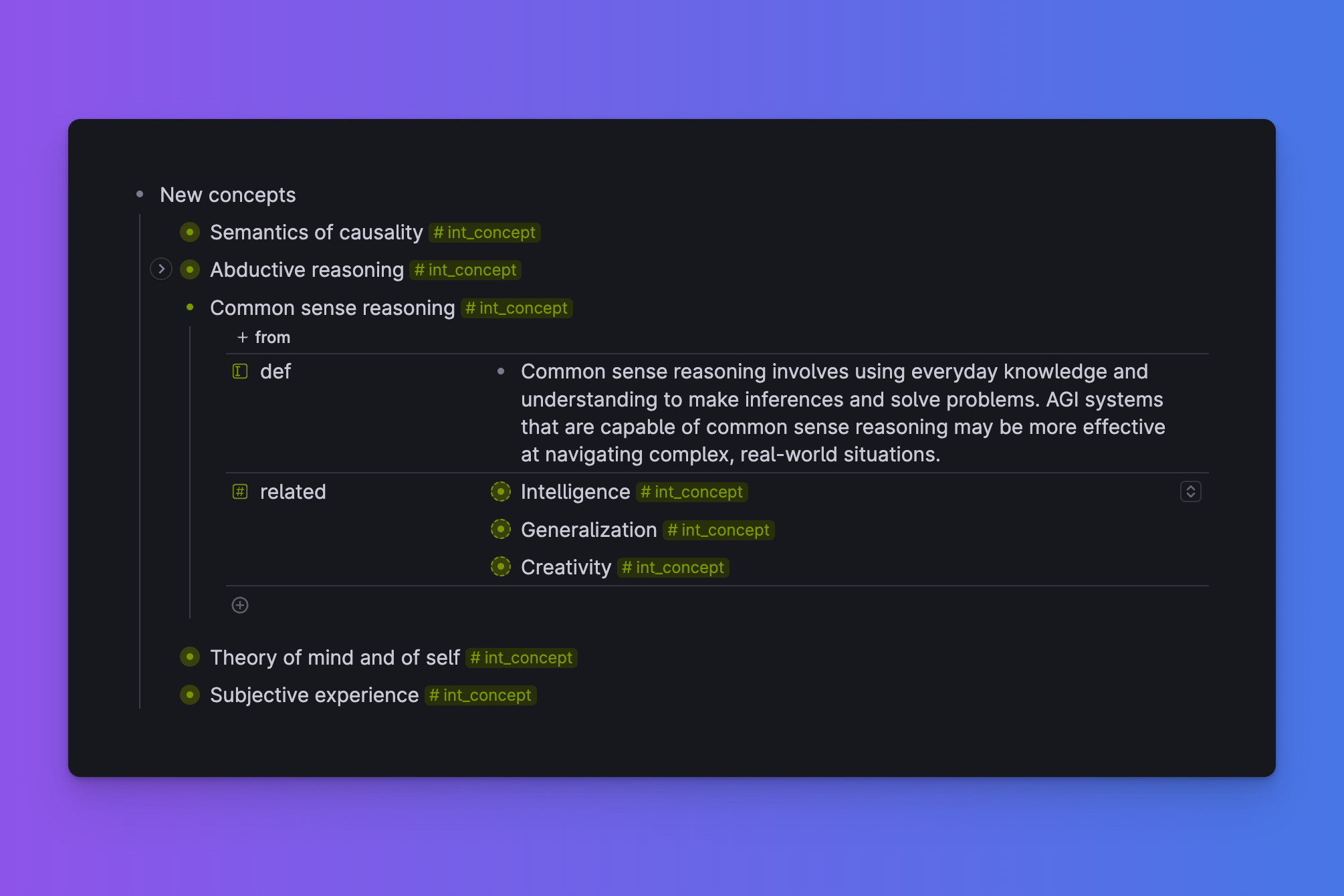

How about I add a few more concepts first?

Here is the tweet from Grady Booch where he introduced a few.

My intuition tells me they are quite important. Let’s find out.

Prompt:

How are the concepts from the list are related to these: [{concept1}, {concept2}, ...]

The list in the prompt contains the core 10 concepts from the previous stage.

Output:

Wow! That's pretty cool! Common sense reasoning is related to Generalization and Creativity. That’s not a very obvious observation.

Nice work, GPT-4!

Using the power of Tana, I link all the concepts together, and my framework is automatically updated as new information is added.
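One way to picture what the linking gives me is a simple two-way graph of concepts. The sketch below is plain Python, not how Tana works internally, and `link_concepts` is a hypothetical helper. It uses only the relationship mentioned above.

```python
from collections import defaultdict


def link_concepts(relations):
    """Build a two-way lookup from (concept, related concept) pairs."""
    graph = defaultdict(set)
    for a, b in relations:
        # Store the link in both directions so either concept finds the other.
        graph[a].add(b)
        graph[b].add(a)
    return graph


relations = [
    ("Common sense reasoning", "Generalization"),
    ("Common sense reasoning", "Creativity"),
]
graph = link_concepts(relations)
print(sorted(graph["Common sense reasoning"]))  # ['Creativity', 'Generalization']
```

Every new pair added to `relations` shows up on both concepts, which is roughly the effect I get from linked nodes in Tana.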

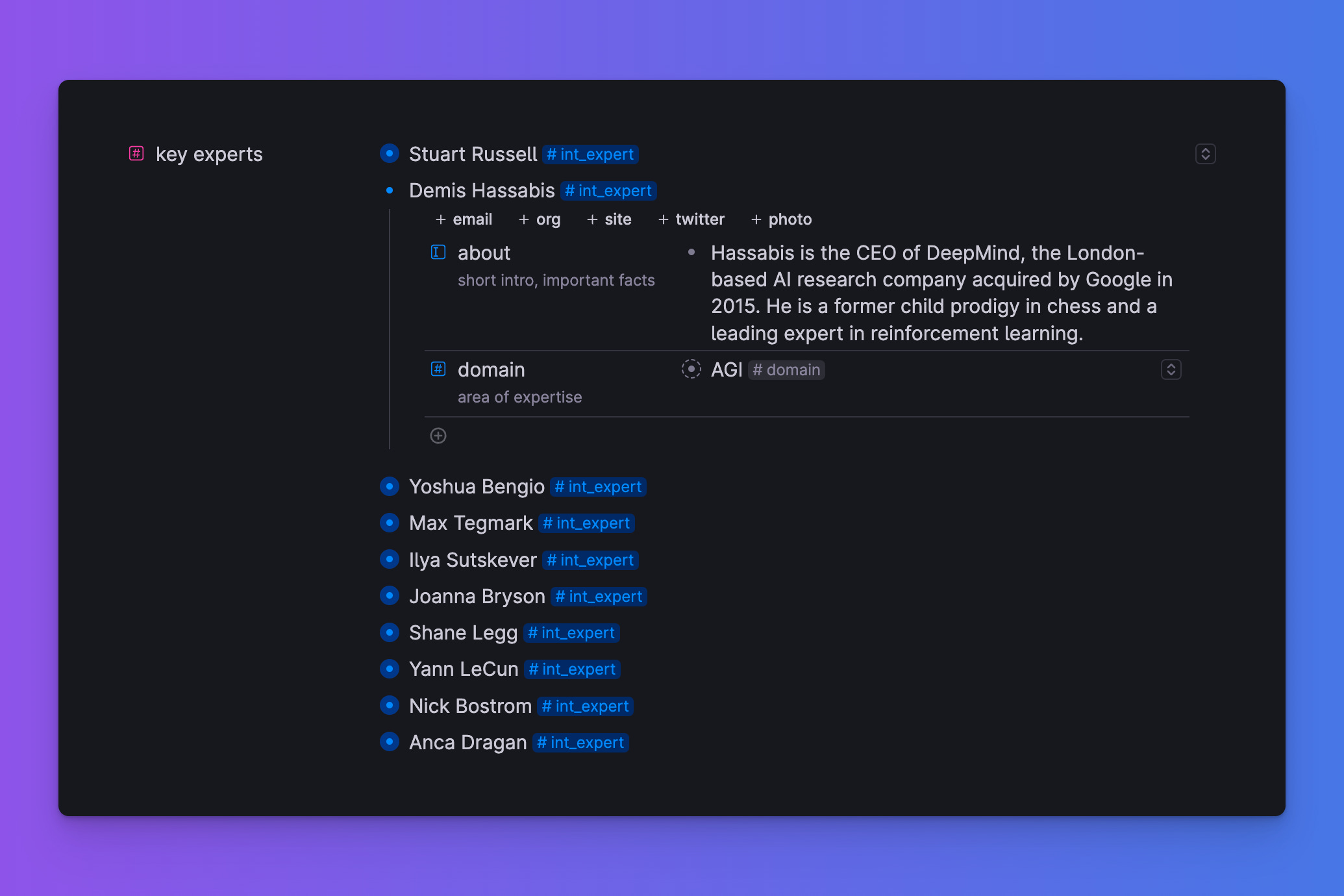

Step 4. Experts

Now I want to find out who the top ten domain experts are.

Prompt:

Who are the 10 key experts in {DOMAIN}

Output:

Obviously, it is better to use the most recent data on experts rather than rely on GPT-4 training data.

But still, the list is quite impressive!

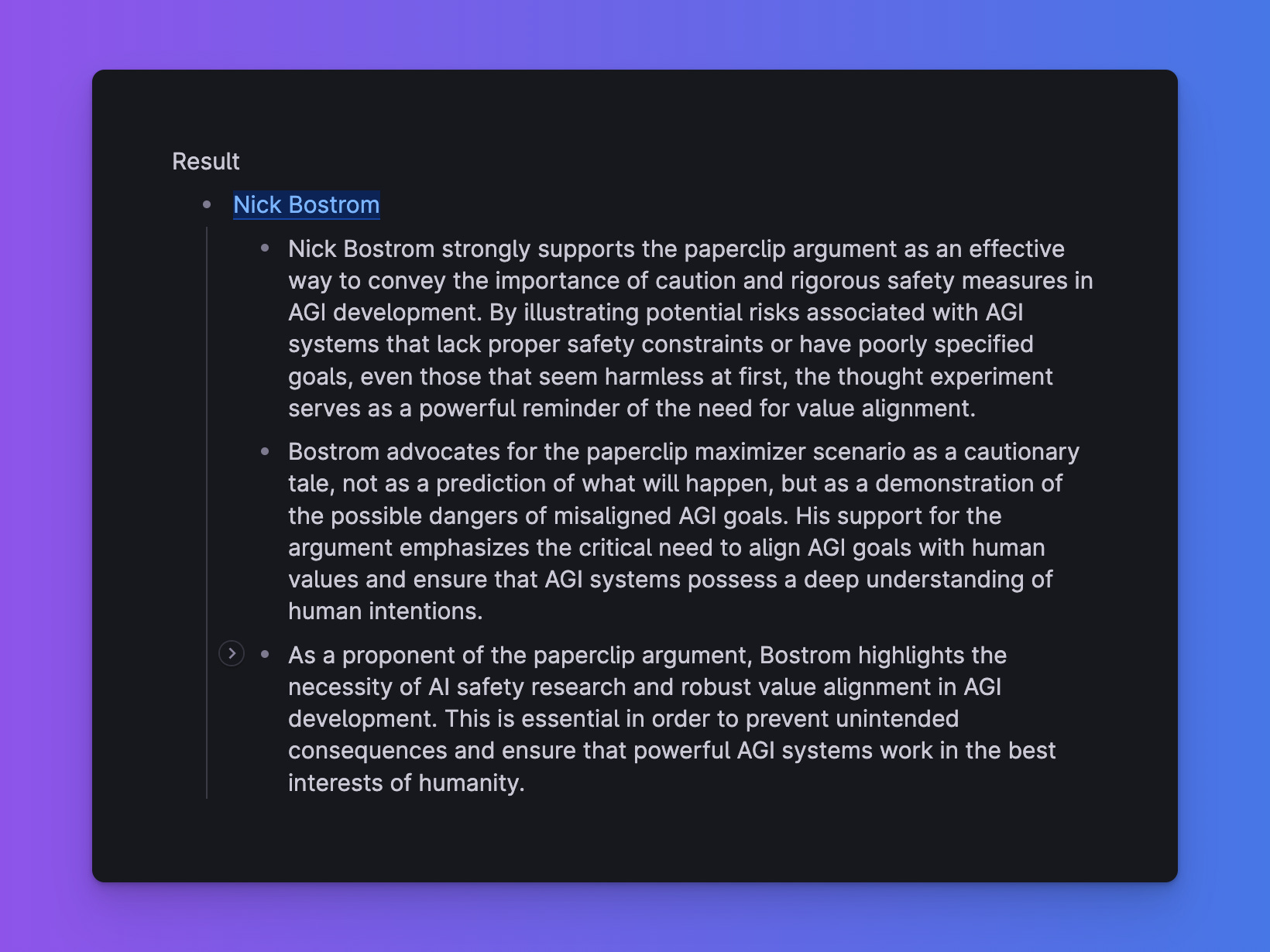

Step 5. Testing the experts

Now I want to see how my expert panel can help me evaluate new information.

I’ll take this rather sceptical article from

Basically, it’s a collection of arguments for why AGI risks are rather exaggerated.

Let’s take the first argument (Paperclip argument) and see what our experts think about it.

Prompt:

This is the counter-argument to the Paperclip Maximizer argument on AGI Risks:

[The argument that some AGI would have a directive to do something banal like turn all matter into paperclips and thus would kill us all, is a big stretch.

If a paper clip AI were to become intelligent enough to outsmart of all of humanity from stopping it, it will be smart enough to realize that its goals are ridiculous.

For an AI to completely outsmart all of humanity, it requires enough intelligence to recognize and improve on it’s own fallibility and the ability to course correct.

But if it’s a paperclip maximizer, it would have to be incredibly dumb in order to not recognize that it’s goal is a foolish one and self correct.

This is also a logically inconsistent argument as the one positing this can select in which parts of the argument the AI is smart or dumb.

The notion that an AGI could be smart enough to defeat all humans but dumb enough to only make paper clips is a pretty far fetched scenario, not an inevitability.]

Who of the experts from the list would disagree with it?

Output:

Nick Bostrom disagrees with the counter-argument to the Paperclip Maximizer. Okay, kinda obvious. He is the author of the Paperclip argument.

I definitely need another perspective on this. So let's invite more experts.

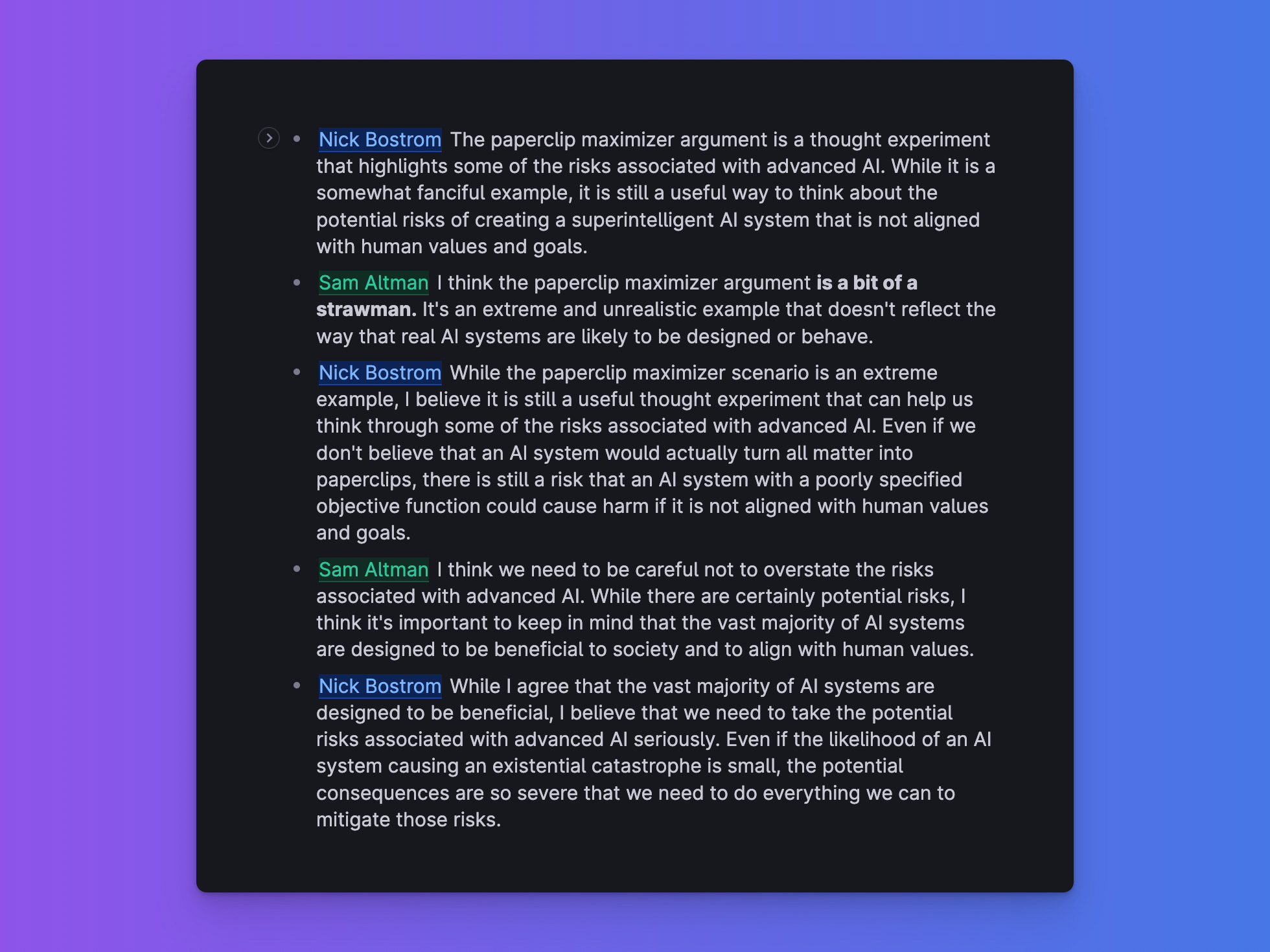

Step 6. Extending the panel of experts

It’s time to add some more external input. Here is a famous matrix of views on AGI:

And it's clear who the biggest optimist is here: Sam Altman. So let’s invite Sam.

Since I want to explore the Paperclip argument further, how about we do a debate?

Nick Bostrom vs Sam Altman.

Prompt:

Write a debate between Nick Bostrom and Sam Altman on Paperclip argument. No moderator. Focus on points of disagreement.

Result:

Nice! It gave me a new perspective. And I really like how Altman applies the Strawman argument concept here.

I also want to map this debate onto the initial set of concepts.

Prompt:

What concepts from the LIST of AGI related concepts are covered in these debates. LIST: []

Result:

Okay, here I want to hit pause.

I'm really satisfied with how the experiment is going so far.

And the coolest thing: I have all of the output from GPT-4 neatly structured in Tana, so I can process it further and enrich it while keeping the structure and consistency.

I will continue my research, sharing the most interesting things here and on Twitter.

The Tana template with the full Intuition method will be available next week. I’ll share it on Twitter too. It’s quite universal and can be applied to almost any domain.

BTW, Intuition is growing. It went from a small side project to a team of 3 incredible people.

If you are interested in collaboration or want to exchange ideas with us, please reach out to me here or on Twitter.